Master Thesis

Hand mesh reconstruction from poses using spiral convolution in Unreal Engine

My master’s thesis is centered around the topics of simulation, virtual reality, and machine learning. The goal of the thesis is to construct a 3D mesh of a hand from a pose using a neural network. The trained network is integrated into Unreal Engine so that it can be used in combination with real-time hand tracking.

I wrote this thesis at the University of Bremen, in the workgroup for Computer Graphics and Virtual Reality (CGVR) under Prof. Dr. Gabriel Zachmann. Janis Roßkamp advised me during the process, and Dr. Thomas Röfer is my second supervisor.

Full-Text and Ideas

The title of the master’s thesis might sound daunting, but here I try to explain the topic and my approach in a more straightforward way. The focus is not on the theory, but on the implementation and the practical parts.

If you are interested in the theoretical part and the whole process, please check the thesis itself:

Abstract

A condensed overview of the thesis

The virtual reality field is continuously developing, with recent advances in entertainment, medicine, academia, and business in general. The representation of virtual avatars is therefore more important than ever. There is a special focus on the visualization of hands, as they are in view most of the time, are our main tool for interacting with objects, and are vital for non-verbal communication. However, their high degree of complexity makes them hard to simulate realistically. A way to create anatomically realistic hands quickly is in demand.

This thesis presents the implementation, training, and deployment of a neural network for the construction of 3D hand meshes from poses in Unreal Engine 5. The approach is based on a variation of the spiral convolution, a method to establish a local neighborhood of vertices on the surface of a mesh. These vertices form a kernel on which a convolutional neural network can operate. This allows the creation of fast neural networks that operate on a 3D mesh. I use this functionality to train the network on a realistic hand model generated with the NIMBLE project. The trained network is deployed in Unreal Engine 5, which, in combination with a Leap Motion Controller, enables real-time hand tracking and construction with the detail level of NIMBLE hands.

A spiral convolution approach was implemented and compared to a previous variant. In a hyperparameter study, different architectures and approaches were tested before training a network that constructs the 3D mesh directly from the hand pose. Visual comparison with ground truth data shows an excellent approximation of the original NIMBLE model in a fraction of the time. Construction of NIMBLE-like models in real-time is thereby made possible.

Overview

An introduction to the idea behind the thesis

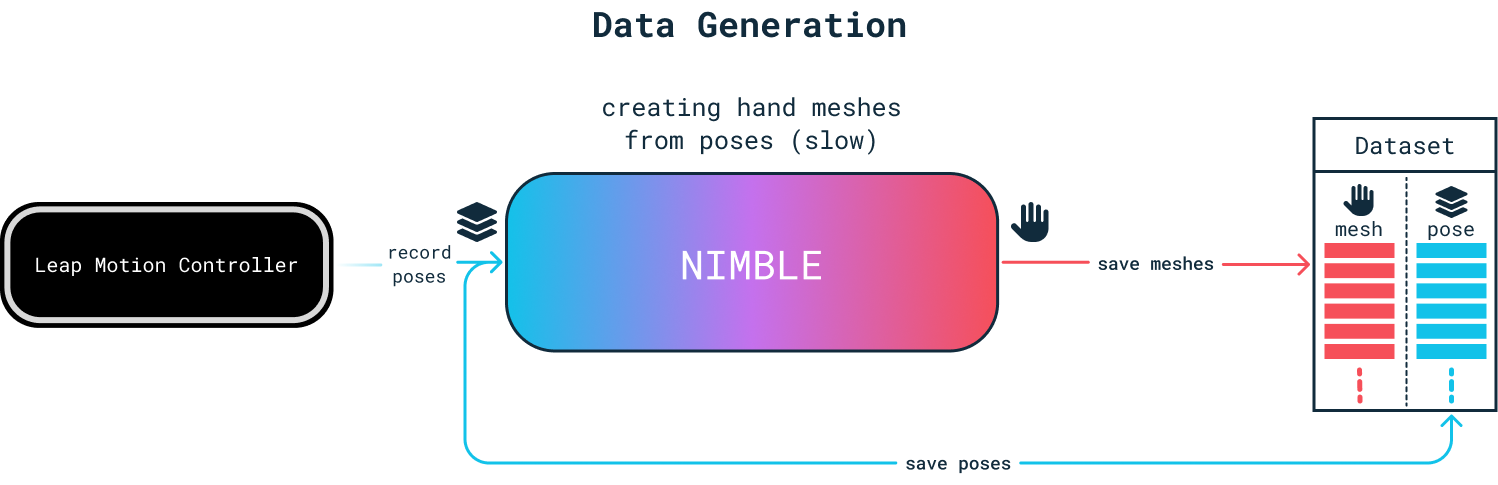

The idea behind this thesis is to train a neural network that can simulate the NIMBLE hand model very quickly.

To achieve this, I first create a dataset of poses by recording my hand with the Leap Motion Controller. I utilize NIMBLE to create a realistic mesh of a hand with the given pose.

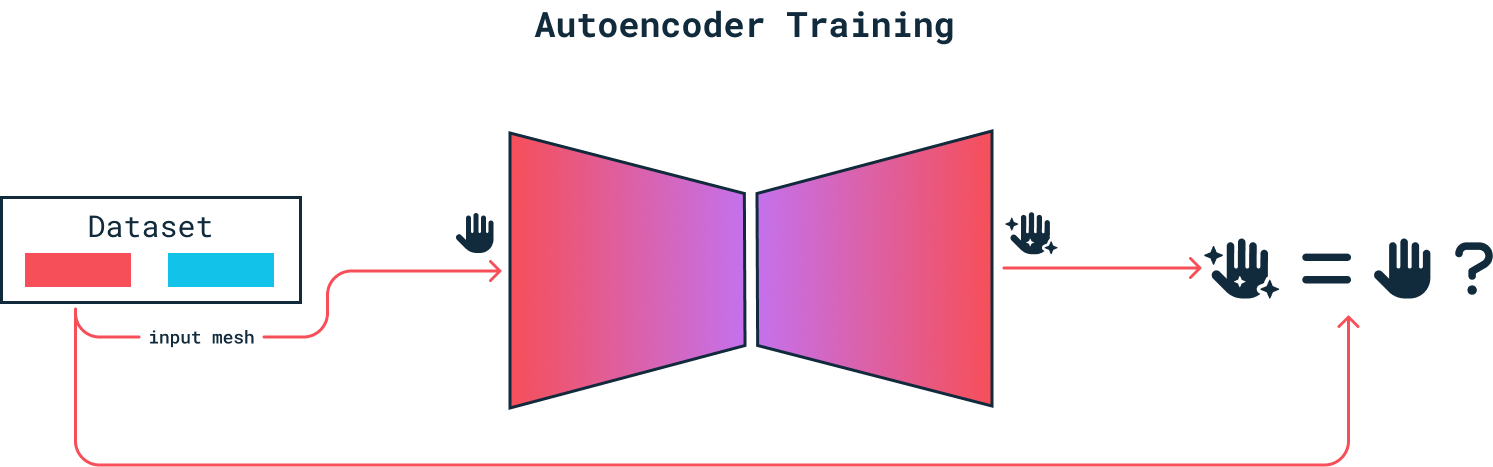

Next, I train an autoencoder that implements the spiral convolution approach discussed in the thesis. This way, the network learns a latent representation that captures the key characteristics of a hand and its pose.

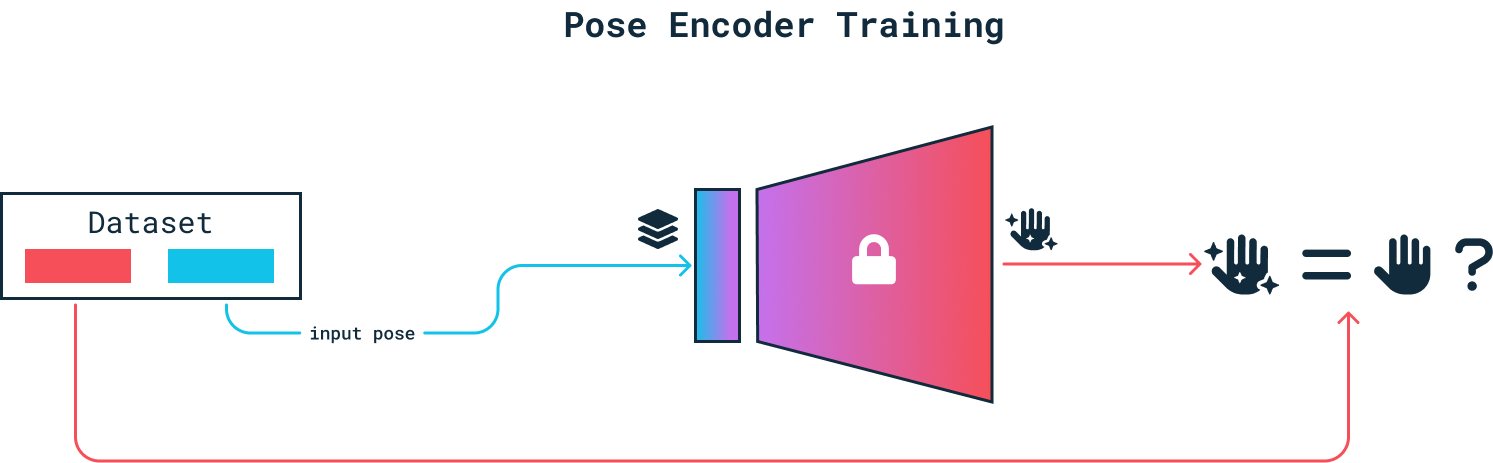
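To make the spiral convolution idea more concrete, here is a minimal PyTorch sketch of the basic operation: for every vertex, the features of its spiral neighbors are gathered, concatenated, and passed through one linear layer. This is not the variant developed in the thesis. The class name `SpiralConv`, the channel sizes, and the toy spiral indices are assumptions for illustration, and the spirals are assumed to be precomputed from the mesh topology.

```python
import torch
import torch.nn as nn


class SpiralConv(nn.Module):
    """Basic spiral convolution: gather spiral neighbors, then apply one linear layer."""

    def __init__(self, in_channels, out_channels, spiral_indices):
        super().__init__()
        # spiral_indices: LongTensor of shape (num_vertices, spiral_length).
        # Row v lists vertex v followed by its neighbors in spiral order.
        self.register_buffer("spiral_indices", spiral_indices)
        self.spiral_length = spiral_indices.shape[1]
        self.linear = nn.Linear(in_channels * self.spiral_length, out_channels)

    def forward(self, x):
        # x: (batch, num_vertices, in_channels)
        batch, num_vertices, channels = x.shape
        # Gather neighbor features: (batch, num_vertices * spiral_length, channels)
        gathered = x[:, self.spiral_indices.reshape(-1), :]
        # Concatenate the features along each spiral:
        # (batch, num_vertices, spiral_length * channels)
        gathered = gathered.reshape(batch, num_vertices, self.spiral_length * channels)
        return self.linear(gathered)


# Toy example: 5 vertices with spirals of length 3 (made-up indices, not a hand mesh).
toy_spirals = torch.tensor([[0, 1, 2], [1, 2, 3], [2, 3, 4], [3, 4, 0], [4, 0, 1]])
layer = SpiralConv(in_channels=3, out_channels=8, spiral_indices=toy_spirals)
vertices = torch.randn(2, 5, 3)  # batch of 2 meshes with xyz coordinates per vertex
features = layer(vertices)
print(features.shape)
```

Because every vertex has a spiral of the same fixed length, the gather step yields a regular tensor, and the convolution reduces to a single dense layer. That is what keeps this kind of network fast.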

To train a model that is able to output a 3D mesh of a hand based on a pose, I remove the encoder of the autoencoder and replace it with a small, fully connected encoder. It receives the pose as an input and learns to translate it into the latent representation of the hand. The decoder functions as a generator from the latent space to the 3D mesh. As it is already trained, the decoder’s weights are locked.

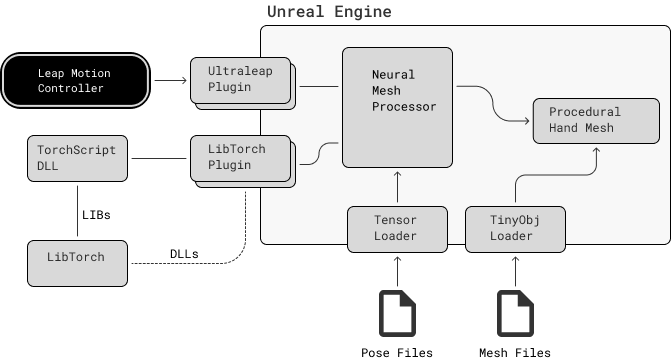
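The encoder swap described above could look roughly like the following PyTorch sketch. The decoder here is only a small stand-in for the pretrained spiral decoder, and the sizes `POSE_DIM`, `LATENT_DIM`, and `NUM_VERTICES`, the L1 loss, and the random data are assumptions for illustration, not values from the thesis.

```python
import torch
import torch.nn as nn

POSE_DIM = 60       # assumed size of the pose vector (hypothetical)
LATENT_DIM = 32     # assumed size of the latent representation (hypothetical)
NUM_VERTICES = 100  # toy vertex count, far smaller than a real hand mesh

# Stand-in for the decoder taken from the trained autoencoder.
decoder = nn.Sequential(
    nn.Linear(LATENT_DIM, 128), nn.ReLU(), nn.Linear(128, NUM_VERTICES * 3)
)

# Lock the decoder: its weights receive no gradients and are never updated.
for param in decoder.parameters():
    param.requires_grad_(False)
decoder.eval()

# Small fully connected encoder that maps a pose to the latent space.
pose_encoder = nn.Sequential(
    nn.Linear(POSE_DIM, 64), nn.ReLU(), nn.Linear(64, LATENT_DIM)
)

# Only the pose encoder's parameters are handed to the optimizer.
optimizer = torch.optim.Adam(pose_encoder.parameters(), lr=1e-3)

# Random placeholder data standing in for (pose, mesh) training pairs.
poses = torch.randn(8, POSE_DIM)
target_meshes = torch.randn(8, NUM_VERTICES, 3)

decoder_before = [p.clone() for p in decoder.parameters()]
for _ in range(5):
    optimizer.zero_grad()
    predicted = decoder(pose_encoder(poses)).reshape(-1, NUM_VERTICES, 3)
    loss = nn.functional.l1_loss(predicted, target_meshes)
    loss.backward()
    optimizer.step()

# The decoder weights are identical before and after training.
decoder_unchanged = all(
    torch.equal(before, after)
    for before, after in zip(decoder_before, decoder.parameters())
)
print(decoder_unchanged)
```

Freezing the decoder means the new encoder has to learn the latent space the autoencoder already established, rather than both parts drifting together.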

Unreal Integration

How to execute the trained model in the Unreal Engine 5

I first serialize the trained model using TorchScript. The PyTorch C++ library, LibTorch, is used to integrate the serialized neural network into Unreal Engine. A custom DLL provides a simple wrapper that loads and executes the serialized version of the model.
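As a rough sketch of the serialization step, the following traces a model with TorchScript, saves it to disk, and reloads it to check that the outputs match. The `PoseToMesh` module, its layer sizes, and the file name `pose_to_mesh.pt` are placeholders for illustration. Whether the thesis uses tracing or scripting is not stated here, so tracing is an assumption.

```python
import os
import tempfile

import torch
import torch.nn as nn

POSE_DIM = 60       # assumed pose vector size (hypothetical)
NUM_VERTICES = 100  # toy vertex count


class PoseToMesh(nn.Module):
    """Placeholder for the trained pose encoder plus decoder."""

    def __init__(self):
        super().__init__()
        self.net = nn.Sequential(
            nn.Linear(POSE_DIM, 64), nn.ReLU(), nn.Linear(64, NUM_VERTICES * 3)
        )

    def forward(self, pose):
        # Returns vertex positions with shape (batch, NUM_VERTICES, 3).
        return self.net(pose).reshape(-1, NUM_VERTICES, 3)


model = PoseToMesh().eval()
example_pose = torch.randn(1, POSE_DIM)

# Record the operations executed for the example input as a TorchScript module.
traced = torch.jit.trace(model, example_pose)

# Save the serialized model; this is the file a C++ wrapper would load.
path = os.path.join(tempfile.mkdtemp(), "pose_to_mesh.pt")
traced.save(path)

# Reload and verify that the serialized model reproduces the original output.
reloaded = torch.jit.load(path)
with torch.no_grad():
    same_output = torch.allclose(model(example_pose), reloaded(example_pose))
print(same_output)
```

On the C++ side, LibTorch reads the same file with `torch::jit::load`, so no Python interpreter is needed at runtime.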

By adapting NeuralVFX’s basic LibTorch plugin, the project can communicate with the DLL and load and execute the neural network model directly within Unreal Engine.

The network’s output, a normalized mesh, is passed to a procedural mesh, which visualizes it.

Results

Overview of the visual results of the hand construction

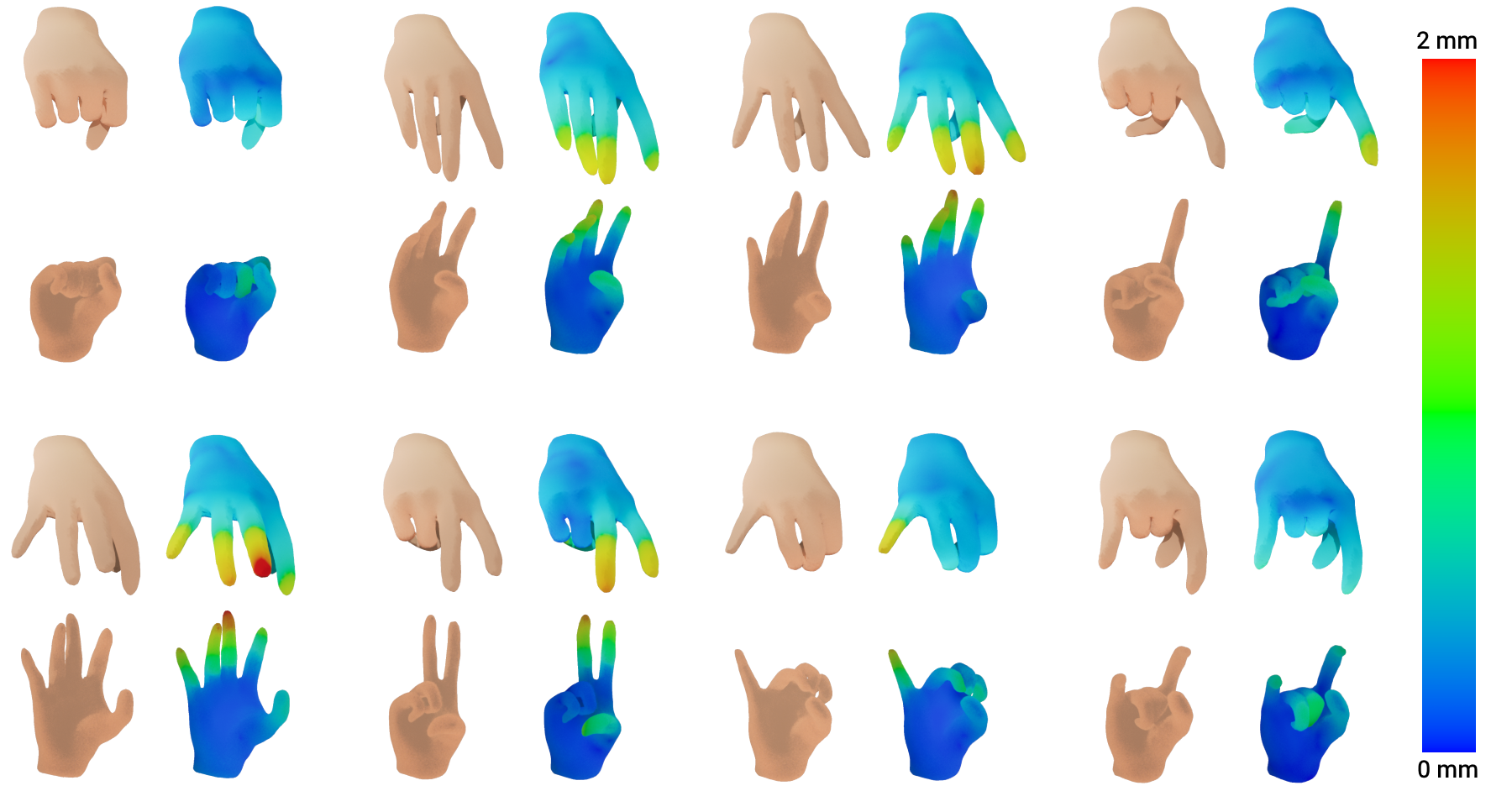

A comparison of eight poses from the test data of the LEAP dataset is shown.

Each example is shown from two viewpoints, a top view and a front view, with the ground truth mesh next to the generated mesh. The color gradient of the generated mesh shows the vertex distance to the ground truth.

All poses are very close to the ground truth mesh, showing a good construction from the poses.

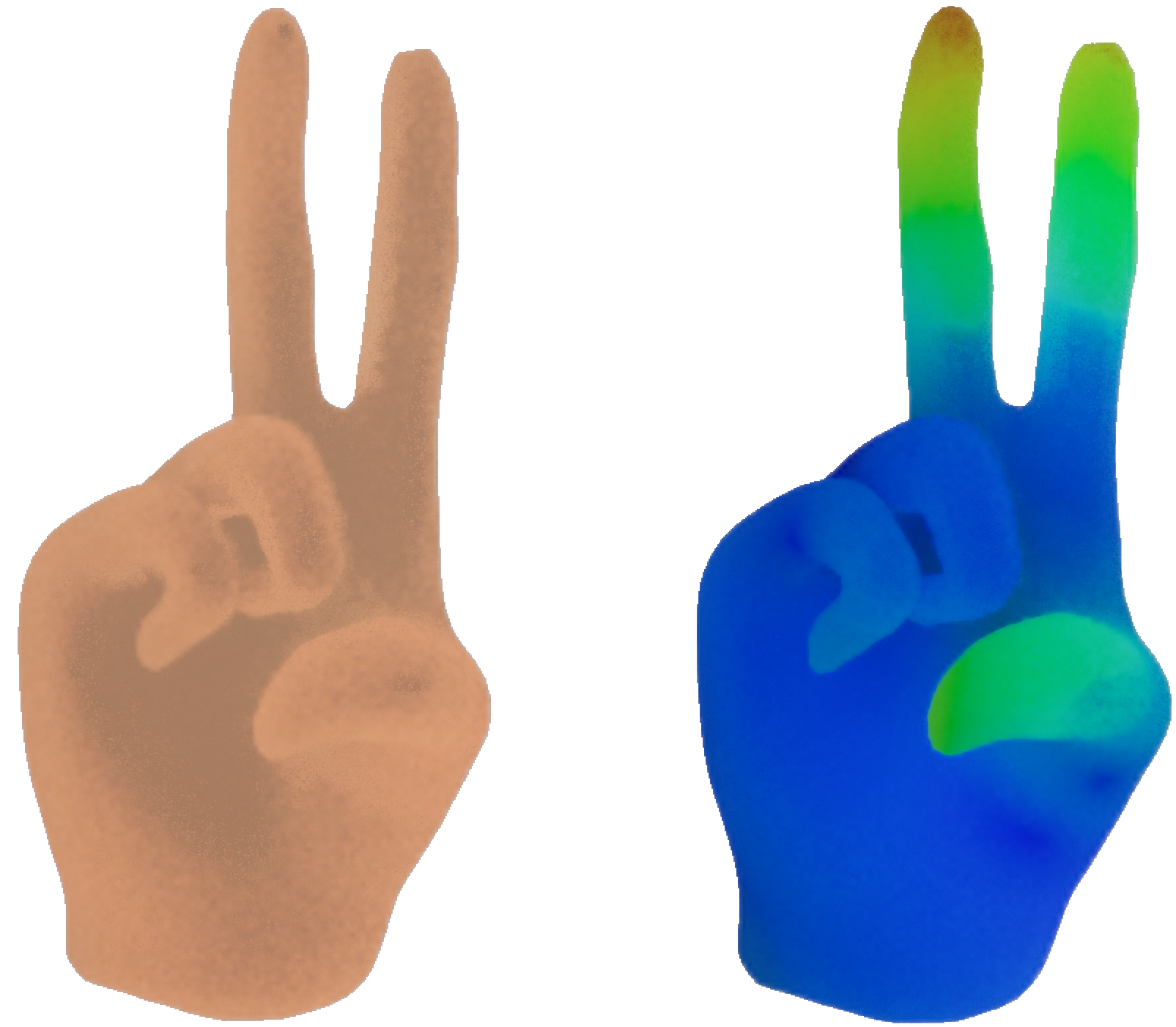

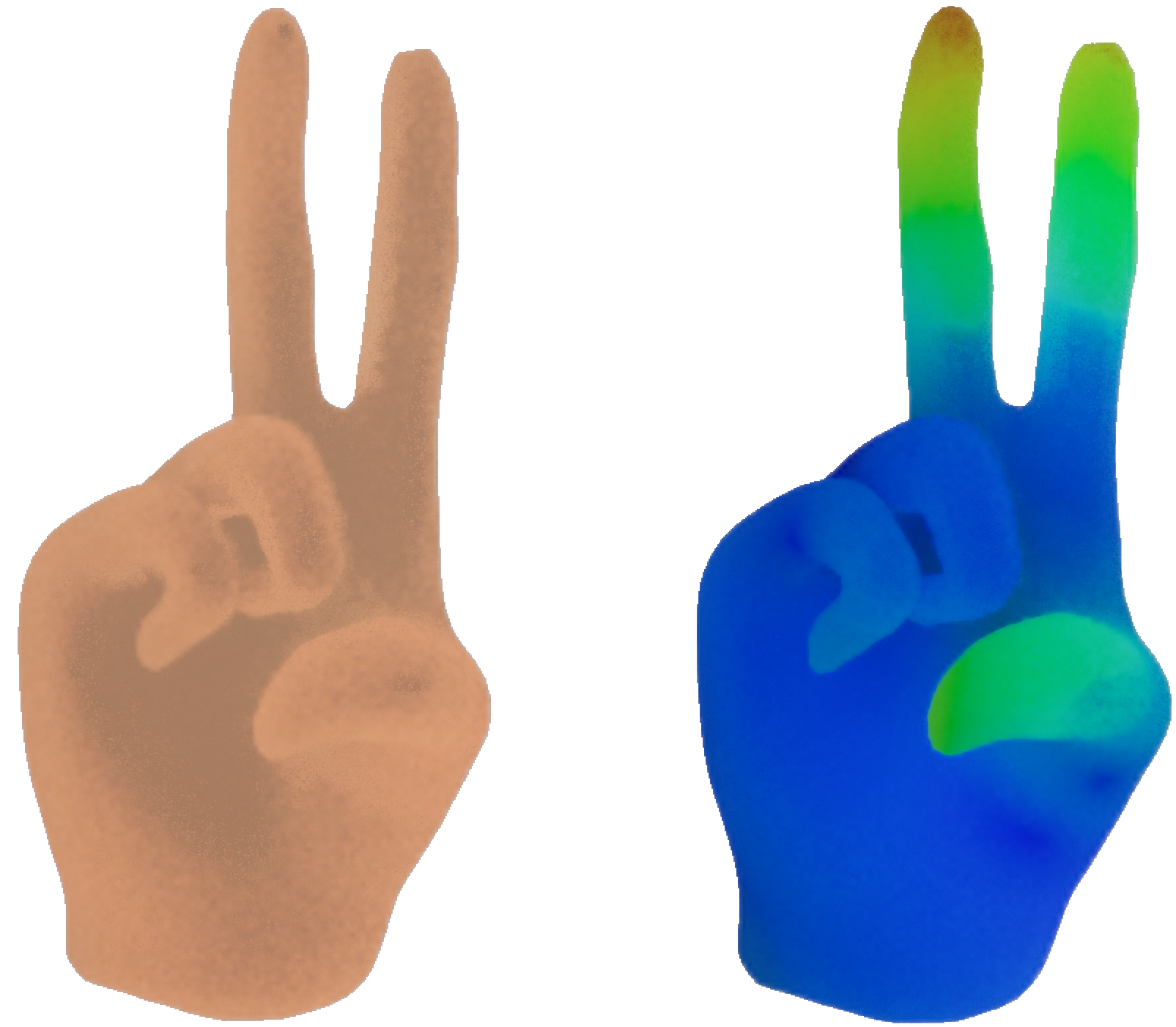

Here, a comparison between the ground truth of a hand and the mesh generated from its pose with the new convolution approach can be seen.

The hand is showing the peace sign. The ground truth does not have a texture but a simple color. The mesh generated from the pose is colored according to its vertex distance, with small vertex distances colored in blue and large vertex distances in red.
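A coloring of this kind can be sketched as follows, assuming the vertex distance is the Euclidean distance between corresponding vertices of the generated and ground truth meshes. The linear blue-to-red ramp and the `max_distance` parameter are assumptions for illustration. The exact color map used for the figures is not specified here.

```python
import torch


def vertex_distance(predicted, ground_truth):
    """Per-vertex Euclidean distance between two meshes of shape (num_vertices, 3)."""
    return torch.linalg.norm(predicted - ground_truth, dim=-1)


def distance_to_color(distance, max_distance):
    """Map distances to RGB: 0 is pure blue, max_distance or more is pure red."""
    t = (distance / max_distance).clamp(0.0, 1.0)
    return torch.stack([t, torch.zeros_like(t), 1.0 - t], dim=-1)


# Toy meshes with three vertices; the offsets are chosen so the distances are easy to read.
ground_truth = torch.tensor([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 1.0, 0.0]])
offsets = torch.tensor([[0.0, 0.0, 0.0], [0.0, 0.0, 0.5], [3.0, 4.0, 0.0]])
predicted = ground_truth + offsets

distances = vertex_distance(predicted, ground_truth)
colors = distance_to_color(distances, max_distance=5.0)
print(distances)
print(colors)
```

The resulting per-vertex colors could then be passed on as vertex colors to whatever renders the mesh.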

Errors tend to increase toward the fingertips and at the thumb, especially for extended fingers. The thumb is curved slightly downwards and does not touch the ring finger.

Overall, the generated mesh is very close to the ground truth and the original pose can be easily understood.

The review of this pose encoder model shows it’s very fast and mostly spot on, even though it has some trouble with tricky finger poses. Adding more varied poses to the training data could smooth out these small kinks. Overall, it does a great job of mimicking hand movements, making it an interesting tool for realistic simulations.